A Solution for All Data Storage Woes?

By Peter Monaghan

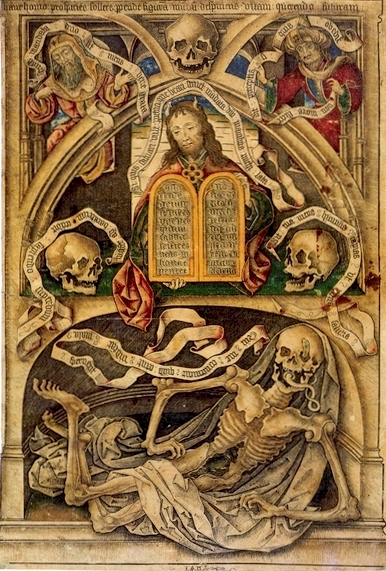

Allegory of the Transience of Life (ca. 1480-90), an engraving on vellum in the British Museum by the engraver Master I. A. M. of Zwolle, was collected by Hernando Colón, en route to not collecting everything ever printed. Image: British Museum.

When Hernando Colón dreamed in the early 16th century of collecting all the world’s knowledge in one place, he could not have imagined that one day it might all fit within a space the size of a sailor’s trunk.

But thanks to stunning advances in storage science, perhaps it could.

Colón had a lot to live up to: his father was Christopher Columbus, who sailed the oceans blue in search of new knowledge, and plunder. But Colón was no man of action: he was an obsessive bibliophile. Rather than navigate across tempest-tossed seas towards dauntingly distant frontiers, Junior hit on the idea of bringing the world to him: he would create a Library of Everything.

Gathering all available information in one place was an enormously fanciful idea in Colón’s day; the technology of today, however – or, at least, of some fast-approaching tomorrow – promises to make possible the use of a surprising medium as a phenomenally compact data-storage option: DNA (deoxyribonucleic acid), the microscopic, self-replicating material that carries the genetic instructions that shape all living organisms.

Storing data in DNA promises to be so compact that, to gather up all the world’s information into one space, even a duffel bag might suffice.

If you already know that DNA, the blueprint of life, could potentially solve the problems of long-term mass storage that keep archivists awake at night, then the information in this article may be old hat for you.

But if you haven’t heard about the flurry of activity around making DNA a viable medium of storage, what follows here could leave you thinking: “This could solve everything!”

It surely could be “the future of movie archiving,” according to Jean Bolot, Technicolor’s vice-president of research and innovation, echoing an increasing number of moving-image insiders.

But using DNA as a storage medium has potentially huge implications for archivists of all kinds, not just moving-image archivists. A dynamic new solution to storage challenges would be a hugely welcome thing, because archivists increasingly wonder how all the data that already exists or is currently being generated will find secure storage.

Of course, moving-image data is small fry compared with what scientists in many fields are now generating. The era of Big Data has taken hold, with data sets so large and so rapidly expanding that existing storage methods have no hope of keeping up with them. A typical estimate, published in Nature magazine in 2016, held that by just 2020, the “global digital archive” (including just digitized information, not the whole corpus of information that the Hernando Colóns of the world would like at their fingertips) will have reached 44 trillion gigabytes, a tenfold increase over the 2013 level; and, by 2040 the level will be such that “if everything were stored for instant access in, say, the flash memory chips used in memory sticks, the archive would consume 10-100 times the expected supply of microchip-grade silicon.”

Holding back the deluge with DNA

The idea of using DNA to combat this deluge has an origin tale. Two, really.

In 1988, Joe Davis, an artist, collaborated with Harvard scientists to map digital data onto the four bases of DNA: A, C, G, and T. Mathematicians like Donald Knuth then detailed the nature and requirements for computing of “quaternary numeral system” mathematics, and in 2012 a Harvard group proposed a design for DNA-based storage.

In Europe, a similar approach was brewing. One evening in 2011, while attending a conference in Hamburg, Germany, Cambridge University’s Nick Goldman and his colleagues at the European Bioinformatics Institute were enjoying a few beers but were also fretting about the oncoming torrents of biological data. It was their job to fret, because they are bioinformaticists — people who devise ways to efficiently store and retrieve data relating to biological phenomena.

Worsening their woes was their knowledge that existing storage media such as hard drives and SD cards all deteriorate, particularly when left just lying around. In fact, according to BBC sound whiz Andy Moore, speaking [at 12:27] on one of the radio network’s now-many items about DNA storage, data is best preserved by being continually used. Imagine everything the BBC has ever created and preserved streaming, somehow, all the time.

In general, Moore says, when it comes to digitally stored information — audio information, in his line of work — “nobody really knows how it behaves when you don’t use it for a long period of time. It’s fine when you use it every day. If you don’t use something, even for a matter of weeks, there’s no guarantee how it will behave when it’s powered up again.”

He wonders, in fact, whether the best way to keep digitized stored data usable might be: “Just keep broadcasting it.” But that’s a solution tangled in considerable practical challenges. He imagines a series of solar-powered satellites that would continually orbit and transmit all the data; but that would not appear to be in the cash-strapped BBC’s plans.

Contemplating all this, Goldman and his colleagues had a collective brainwave: If the challenge was to store and retrieve ever-increasing orders of magnitude of data, and preserve them long into the future, why not use a medium that could enable much smaller storage and vastly greater longevity than current methods such as hard drives, magnetic tapes, and the like?

And wasn’t that potential medium to be found at a molecular level? Like, in DNA?

Could a way be devised to use DNA for coding information?

Turns out that devising ways was not that difficult – apparently not, because the origin legend holds that the beer-imbibing bioinformaticists had covered paper napkins with specifications before the bar closed for the night.

Their solution, or others quite like it, has now been used by a variety of scientists and computer engineers. The core of the approach is to encode digital data into fragments of synthesized DNA. Digital data, which is in the form of zeros and ones, is transcribed into strands of the four chemical bases that form the rungs of DNA’s laddered double-helix structure: adenine, cytosine, guanine, and thymine (A, C, G, T). The data can then be read (turned back into digital code) using DNA sequencing of the kind that is common in genetic fingerprinting, genome sequencing, and similar tasks.
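
As a rough illustration of what transcribing zeros and ones into bases can mean, here is a minimal sketch in Python. It assumes the simplest possible mapping, two bits per base (00 to A, 01 to C, 10 to G, 11 to T). That mapping and the function names `encode` and `decode` are illustrative assumptions, not the scheme Goldman's group or any other lab actually used; practical schemes add constraints and redundancy that are left out here.

```python
# Hypothetical two-bits-per-base mapping, chosen only for illustration.
BITS_TO_BASE = {"00": "A", "01": "C", "10": "G", "11": "T"}
BASE_TO_BITS = {base: bits for bits, base in BITS_TO_BASE.items()}


def encode(data: bytes) -> str:
    """Turn bytes into a string of A/C/G/T, four bases per byte."""
    bits = "".join(f"{byte:08b}" for byte in data)
    return "".join(BITS_TO_BASE[bits[i:i + 2]] for i in range(0, len(bits), 2))


def decode(strand: str) -> bytes:
    """Turn a string of A/C/G/T back into the original bytes."""
    bits = "".join(BASE_TO_BITS[base] for base in strand)
    return bytes(int(bits[i:i + 8], 2) for i in range(0, len(bits), 8))


strand = encode(b"Hi")
print(strand)          # the two bytes written as eight bases
print(decode(strand))  # the same two bytes read back
```

With this mapping every byte becomes four bases, so the round trip from bytes to bases and back loses nothing, at least on paper.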

Over the last 10 to 15 years, more and more sophisticated approaches have been developed to ensure that data retrieved matches data encoded. These fail-safe techniques (approaches to error tolerance through built-in redundancy in the information’s storage structure — detailed in Nature) entail, for example, creating multiple copies by “feeding” the encoded strands of synthetic DNA to, e.g., E. coli bacteria, which (as part of their defense system) incorporate them into their own genetic codes — their genomes — and multiply them.
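
One simple way to picture how redundancy guards against misreads is a majority vote across several copies of the same strand. The sketch below is only an illustration under stated assumptions: the reads are invented, they are assumed to be the same length, and only substitutions (one base misread as another) are handled. It is not the error-tolerance method detailed in Nature.

```python
from collections import Counter


def consensus(reads: list[str]) -> str:
    """Pick the most common base at each position across equal-length reads."""
    if len({len(read) for read in reads}) != 1:
        raise ValueError("this sketch assumes at least one read, all of equal length")
    return "".join(
        Counter(column).most_common(1)[0][0] for column in zip(*reads)
    )


# Five invented reads of one strand; two of them contain a single misread base.
reads = ["CAGACGGC", "CAGACGGC", "CATACGGC", "CAGACGGA", "CAGACGGC"]
print(consensus(reads))
```

The more copies that agree at a position, the less a stray error in any one copy matters.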

In addition, computer scientists and engineers have developed ways to tag stored data with codes that identify the placement, sequence, and other relevant characteristics of points of data, such as its relations with other points.
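
A sketch of how such tags might work: each fragment carries a short index, itself written in bases, that says where the fragment belongs, so a jumbled pool can be put back in order. The index length, the base-4 numbering, and the function names are all assumptions made for this example, not a description of any published scheme.

```python
import random

BASES = "ACGT"
INDEX_LEN = 4  # hypothetical: 4 bases can number up to 4**4 = 256 fragments


def index_to_bases(n: int) -> str:
    """Write a fragment number as a fixed-width base-4 tag."""
    digits = []
    for _ in range(INDEX_LEN):
        n, remainder = divmod(n, 4)
        digits.append(BASES[remainder])
    return "".join(reversed(digits))


def bases_to_index(tag: str) -> int:
    """Read a base-4 tag back into a fragment number."""
    n = 0
    for base in tag:
        n = n * 4 + BASES.index(base)
    return n


def fragment(payload: str, size: int) -> list[str]:
    """Cut a long strand into pieces and prefix each with its index tag."""
    pieces = [payload[i:i + size] for i in range(0, len(payload), size)]
    if len(pieces) > 4 ** INDEX_LEN:
        raise ValueError("too many fragments for the chosen index length")
    return [index_to_bases(i) + piece for i, piece in enumerate(pieces)]


def reassemble(pool: list[str]) -> str:
    """Sort fragments by their index tag and strip the tags."""
    ordered = sorted(pool, key=lambda s: bases_to_index(s[:INDEX_LEN]))
    return "".join(s[INDEX_LEN:] for s in ordered)


payload = "CAGACGGCTTAGGACT"
pool = fragment(payload, 5)
random.shuffle(pool)  # fragments float in no particular order
print(reassemble(pool) == payload)
```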

Such techniques allow encoded and stored data to be read back into computer code with impressive and increasing accuracy, even though early proof-of-concept data-DNA is indistinguishable to the naked eye from the droplets of water that hold it.

How that helps moving-image archivists

The usefulness of such approaches, for moving-image archivists, is that coding can reflect, for example, the positions, timing, and shades of pixels in images. Some of the early proofs-of-concept of DNA storage have made use of that capability, not just to demonstrate that the approach can work, but also to create a publicity splash.

Between 1999 and 2010, pioneers of DNA information storage encoded into microbes a sentence from Genesis, Shakespeare’s sonnets, a PDF of a paper by James Watson and Francis Crick about the structure of DNA, and part of Martin Luther King Jr.’s “I Have a Dream” speech; they also stored the lyrics of “It’s a Small World” and Einstein’s E=mc² in bacterial genomes, and encoded a quotation from James Joyce into a lab-constructed genome.

Moving-image applications have similarly progressed, with similar showmanship. Seth Shipman of Harvard Medical School, for example, encoded in DNA a series of stills of a galloping horse (above) created in the late 19th century by Eadweard Muybridge, often considered the “father of motion pictures.”

He and his colleagues were able to do that with stunning compactness: in one single gram of DNA, they stored about 700 terabytes of data.

Working with Harvard’s technology, Technicolor in 2016 encoded in DNA a million copies of a short film. The company’s vice-president of research and innovation, Jean Bolot, told The Guardian: “This, we believe, is what the future of movie archiving will look like.”

It didn’t look like much, at all – and that was part of the point. He displayed a tiny vial of water that was far greater in volume than the million copies within it of the 1902 French silent, A Trip to the Moon, a pioneer of the use of visual effects.

Bolot provided the now-obligatory everyday image of compactness: the archives of all Hollywood studios, he said, could fit within a space the size of a Lego brick.

Almost all the early developers of the capability have, it seems, grasped the importance of selling the techniques to a larger public and potentially grant-awarding agencies. Yaniv Erlich and Dina Zielinski of the New York Genome Center and Columbia University encoded a silent movie, a computer operating system, a photo, a scientific paper, a computer virus, and an Amazon gift card.

Despite these attention-grabbing efforts, the storage capabilities of DNA remain little publicly noticed, even if companies like Technicolor and Microsoft are hard at work on them, largely shut away from the curious eyes of university-based researchers.

Some researchers want to develop means of having living cells of, for example, a cancer store data about themselves that can be retrieved and used for therapies. Shipman told Atlantic Monthly last year: “We want cells to go out and record environmental or biological information that we don’t already know.” We might one day all walk around with data-detection, -analysis, and -storage methods and means programmed into us at a cellular level.

That’s to say that CRISPR techniques have potential applications in medical-detection systems and other fields that may go far beyond what moving-image archivists need – at least, what they need in dealing with the current challenge of securing films and the like. But all the imaginable applications share an interest in some of the qualities and capabilities of DNA storage.

Deficits, but also large positives

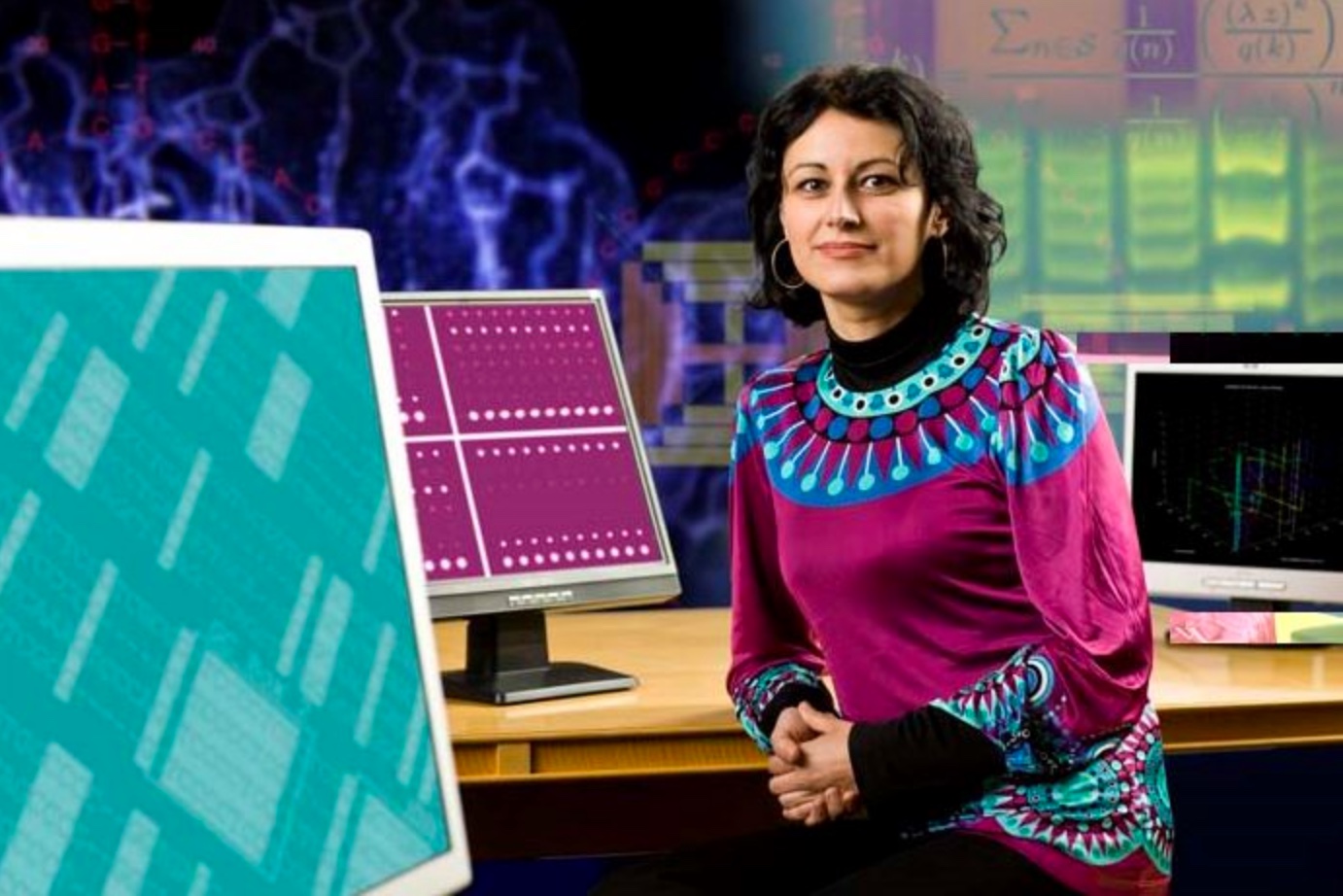

The benefits of DNA storage are not in doubt, says Olgica Milenkovic, a professor of electrical and computer engineering at the Coordinated Science Lab at the University of Illinois at Urbana-Champaign (right). She and her colleagues have noted such positives as:

– it provides a means of very dense storage — in fact, the densest to date;

– it provides essentially permanent storage, particularly if kept cold, dry, and away from light and radiation; a proof of this capability is that remains of animals dead for more than 5,000 years still yield intact, analyzable DNA;

– information stored in DNA will always be readable because medical scientists are surely always going to pursue better and better means of observing and manipulating the “hard-disc drive of life”;

– DNA storage, unlike optical and magnetic technologies, does not require electrical supply to maintain data integrity;

– the costs of DNA synthesis and sequencing will likely plummet in the next decade or two.

The emerging pluses of the approach address some of the reservations Nick Goldman spoke about to the BBC’s Inside Science program in 2015. He said that a useful data-storage technique would surely emerge from the early proofs of concept, but that “making actual pieces of DNA that store this information is probably the biggest bottleneck, at the moment. We need the reading of DNA to get faster and cheaper, and that should be possible, and other little things that make it more like the way that we’re used to working with computers.”

Due to the expense of storage and retrieval, he said, first uses would likely be for highly valued information such as bank records.

That speed and affordability are coming closer, although not as fast as researchers would prefer.

Milenkovic and her colleagues were originally interested in DNA-based data storage because they are specialists in coding theory, which in turn enables operative data formatting. That led them to pioneer random-access storage in DNA: the capability to read information directly and without errors from where it is stored in what is essentially a wash of fluid. That’s like finding a minuscule needle within a mighty large haystack. To help make that possible, Milenkovic and her colleagues developed ways to attach extra sequences that serve as address tags to stored strands of DNA, a sort of Waze for DNA storage.
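
To see why an address tag helps, picture the pool as an unordered list of strands from many files, each strand beginning with the tag of the file it belongs to. The sketch below is a toy model only: the tag sequences, the file names, and the `store` and `retrieve` functions are invented for illustration, and it assumes every tag has the same length. It does not reproduce the addressing scheme Milenkovic's group designed.

```python
# Hypothetical fixed-length address tags, one per stored file.
POSTER_TAG = "ACGTACGT"
FILM_TAG = "TTGACCAG"


def store(pool: list[str], address: str, payload: str) -> None:
    """Add a strand to the pool with its address tag in front."""
    pool.append(address + payload)


def retrieve(pool: list[str], address: str) -> list[str]:
    """Pull out only the strands that start with the requested tag."""
    return [s[len(address):] for s in pool if s.startswith(address)]


pool: list[str] = []
store(pool, POSTER_TAG, "GGCTTAGG")
store(pool, FILM_TAG, "CAGACGGC")
store(pool, POSTER_TAG, "ACTAACTA")

# Only the film strand comes back; the poster strands stay in the pool unread.
print(retrieve(pool, FILM_TAG))
```

The point of the tag is that nothing else in the pool has to be read in order to find one file.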

Cost is the hold-up

Theoretically, that’s fine, Milenkovic says. But cost-wise, it remains a huge challenge, at least for now. “The biggest challenge is writing data inexpensively,” she says. One approach to reducing cost, she says, is to code information in such ways (with redundancies in coding and tagging, for instance) that errors are minimized, at the outset.

She says that such approaches are, in fact, standard practice in data storage, but the cost when it comes to DNA is that companies that synthesize DNA — that create non-biological counterparts of what is in living organisms — are not equipped to do that work economically. They are set up for other roles — primarily, synthesizing DNA for the purposes of genetic analysis. Affordably switching procedures, hardware, and software to data storage is still a way off.

(Among her and her team’s contributions is to have provided a crystal-clear brief history and technological description of DNA data storage.)

She says that technological advances relating to reading and replicating DNA are ushering in a time when using DNA as a data-detection, recording, and storage medium will become practically as well as theoretically possible — possible, and advisable.

Among those advances, she and her colleagues have noted, “researchers are now starting to sequence large quantities of fragments using nanopore technologies, which feed DNA through pores as if they were spaghetti noodles slipping through a large-holed strainer.” As DNA passes through a pore, its bases (A, C, G, and T) can be rapidly read.

To facilitate reading, data can be stored in large numbers of replicas; that flood of replicas, produced at a lower and lower cost using the “polymerase chain reaction,” can then be readily read.

Such progress both gives her hope and gives her pause, she says. Pause, because “the more you work in the field, the more you realize that as usual the challenge is the cost. The technologies, the approaches for retrieving, for handling the data, they are all in place. The problem is that they are exceptionally expensive. And some steps are pretty time consuming.”

Cost is particularly an issue for academic researchers like Milenkovic who depend on grants. Large corporations like Microsoft and Technicolor, and no doubt many others, have publicized certain accomplishments, but who knows what they might have developed but kept secret for reasons of financial competition? The real state of the science cannot be fully known. Milenkovic speaks of “isolated examples where companies can afford to store a large volume of data by synthesizing it using companies like Twist, Agilent, or IDT that are pretty expensive. They probably get special deals for publicity reasons, if for nothing else.”

But “an ordinary academic user, someone like myself, has to pay a lot of money for very modest amounts of data.” That situation will likely not improve unless DNA-synthesis technologies become much simpler, faster, and less expensive.

Academic labs generally declare their findings as a requirement of grants, so they are advancing the technology while also struggling to keep up with it. Large chunks of grant money disappear into such basic costs as having DNA strands created to store data. “You store 15 images, which is roughly a few megabytes because they are not very high resolution, and you already have to pay $6,000,” says Milenkovic.
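
A back-of-the-envelope calculation shows how steep that is. The $6,000 and the 15 images come from Milenkovic's remark; the figure of 3 megabytes is purely an assumption standing in for "a few megabytes," so the per-megabyte and per-gigabyte results are indicative only.

```python
total_cost_usd = 6_000   # from the quote
images = 15              # from the quote
assumed_megabytes = 3    # assumption: "a few megabytes" taken as 3 MB

cost_per_image = total_cost_usd / images
cost_per_megabyte = total_cost_usd / assumed_megabytes
cost_per_gigabyte = cost_per_megabyte * 1_000  # 1 GB = 1,000 MB

print(f"per image:    ${cost_per_image:,.0f}")
print(f"per megabyte: ${cost_per_megabyte:,.0f}")
print(f"per gigabyte: ${cost_per_gigabyte:,.0f}")
```

Under that assumption, a single low-resolution image costs hundreds of dollars to write, and a gigabyte would run into the millions.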

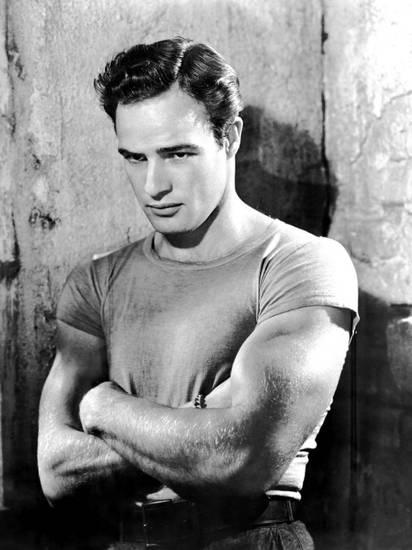

Still, she remains upbeat about the technology, and like many of those pushing it ahead, has a film-related project in mind as a demonstration of what she’d like it to be able to do. “What I really like about the DNA storage platform is that it gives you so many opportunities to do interesting things — in terms of retrieving, in-memory computing, specialized search… In-memory image processing, which is something we’re working on. Our latest project, not published yet, is to store all the movie posters of Marlon Brando, who is my big movie idol, and then try to do in-memory image processing: color-saturation changes, changes in coloring schemes, conversions of images into black-and-white images…”

For her, one holy grail is in-memory processing of this kind: working on stored images or other data directly, without first retrieving them and reading them out to be manipulated with software. Imagine, as an example of what she means, Marlon Brando in A Streetcar Named Desire in a succession of differently colored muscle shirts.

Getting there, and well ahead, will require not only more affordable processing, but also higher quality. Currently, the expensive DNA she and her colleagues order for their experiments is not of sufficient quality, so needs to be tinkered with once it’s delivered. “Very often,” she says, “some molecules are missing, some molecules are poorly synthesized, synthesis is terminated, and that’s the case for all the synthesis companies we’ve worked with.”

Her optimism about the future of the techniques rests on the expectation that accuracy and affordability will surely improve. Retrieval of information from synthesized, data-encoded DNA need not be a bottleneck, she says. A great deal of research in a variety of fields, not just data storage, has gone into improving capabilities. “With nanopore technologies that are being developed as part of a third generation of sequencing platforms, you can really completely mitigate the problem of low speed and long delay and low quality readout for DNA information,” she says.

Watching the moving-image legacy via DNA?

All this leads to the likelihood that the techniques and technologies will coalesce to make DNA data storage an affordable reality, even if that may take a while.

Researchers speak of the possibility of using DNA storage to “apocalypse-proof human culture” – we might almost all be fried, but at least the survivors could decode DNA repositories and watch old movies.

Of course, if their viewing options are to reflect the breadth of moving-image products that have been made to date, a lot more preservation will have to be done. While it is theoretically possible for the whole moving-image legacy to be preserved digitally, and then via DNA, it seems unlikely that anything like that will ever occur: most feature films, television programs, industrial films, home movies, and other moving-image products are unlikely ever to be digitized. For one thing, before anyone will get to most of it, it will have too badly deteriorated on the media now storing it.

But, who knows? Perhaps the advent of DNA storage or something similar will spur more and more rapid and thorough preservation efforts.

Already, approaches to storage that are like DNA storage, but potentially more affordable and efficient, are emerging. Milenkovic particularly likes an approach being pioneered by Jean-François Lutz (left), at the Institut Charles Sadron in Strasbourg. He is using synthetic polymers that are very regular and more easily manageable than DNA. Issues remain in how to read out data stored on such a platform, but the promise of the approach is real, Milenkovic says. “It’s really creative. It’s very clean, and intelligently designed.” It’s not as flashy as using DNA, she says, but it could use nanopore readers to jump ahead of DNA storage in feasibility.

For the moment, DNA has the lead. No article about using the blueprint of life for storage would be complete without specifying that what technologists are using is not biological DNA; rather, it is synthetic, purpose-built DNA, removed from any biological role, and in fact incapable of it.

That should allay the concerns of those who fear sudden contagion of the planet with fungus-like infestations of snippets of I Love Lucy or Planet of the Apes or telecasts of a presidential debate.
